Spark Intelligence #40: The agent era and what your agency should do about it

The AI brief for creative leaders to grow your business and career, by Spark AI

👋 Greetings earthlings,

Emma here. WPP is calling them ‘operating systems’. Anthropic just launched Agent Teams, where multiple AI agents coordinate tasks without human oversight. And somewhere on the internet, AI bots are running their own social network – posting, debating, forming communities, no humans involved (more on that shortly). Welcome to the week AI agents went mainstream. Or did they?

Because here’s the thing – what one company calls an 'agent,' another calls a chatbot with a nice interface. The smoke-and-mirrors quotient is high. But underneath the theatre, the reality is that agents are arriving faster than most agencies' security policies can keep up with.

This week: what agents actually are, where they genuinely are today, why the most popular one has security researchers losing sleep, and what you should be asking your team before anyone connects anything to your systems.

Let’s jump in:

1. OpenClaw: the viral AI agent that should make you nervous

Let's start with a simple definition. When we say "AI agent", we mean software that doesn't just answer questions but takes actions on your behalf: reading your emails, checking your calendar, sending messages, browsing the web, and deciding what to do next without you prompting it every step of the way. A chatbot waits for you to ask; an agent gets on with it.

OpenClaw is an open-source AI agent, one of the first to go properly mainstream. It's free software that runs locally on your computer and then carries out tasks autonomously. If you're on LinkedIn you've almost certainly seen people either raving about it or scared witless by it. If you're not, there's a decent chance someone on your team has.

I asked one of our Spark AI coaches, the formidably astute Emma Jackson (better known as EJ), to dig into this properly. Here's her verdict:

“If your CTO hasn't been sleeping well, it's probably because of OpenClaw. If you don't have a CTO or a well thought-out AI policy, you might want to revise the quality of your sleep, too!

OpenClaw is an open-source AI agent that runs locally on your computer, connects to your apps like WhatsApp, Slack, email, and calendars, and (here’s the important bit) can act autonomously following your instructions. Want to retrieve all the contact data in your CRM, draft a personalised message to everyone, and then send the emails? Done. Read last week’s newsletter, write five LinkedIn posts, and schedule them to go out over the week - also done.

It's free, going viral, and at first glance promises what we've hoped AI would always be about: getting on and doing the boring tasks whilst you focus on the higher value, more interesting stuff.

“That sounds great!” you might be thinking. But all that power comes with a price, and the price is security, or rather the lack of it. Security researcher Simon Willison talks about the "lethal trifecta" that makes AI agents vulnerable by design: access to private data (i.e. everything on your computer or internal network), exposure to untrusted content (i.e. everything on the internet, or emails arriving in your Inbox), and ability to take external actions (e.g. send an email, or fill out a web form). OpenClaw has all three by design. It’s what makes it powerful and useful, but also what makes it extremely dangerous. It reads an email that tells it to log into your password manager and send all your data in it to a hacker? Uh oh. If that doesn’t have you worried, it should.

Security researchers have already discovered 17,500 exposed installations of OpenClaw; 93.4% had authentication bypasses. And 12% of the OpenClaw skills marketplace turns out to be malware disguised as legitimate tools. OpenClaw's own documentation admits: "There is no 'perfectly secure' setup."

Can you use it safely? Technically yes. But only if you're comfortable hardening Docker containers, whitelisting network access, sandboxing tool execution, and then paying the bill as it burns through your Claude tokens. And even then, prompt injection remains unsolved across all AI agents.

Does it mean we can just ignore OpenClaw? While it's not safe enough to be a mainstream tool for anybody, it clearly points in the direction that agentic AI is going. How long will it be before more secure versions of OpenClaw, released by Microsoft or Anthropic, are suitable for general business use? Who knows, but I can guarantee you they're working hard at it. Claude Cowork is an early version of this, with limited functionality to allow it to remain relatively secure (even so, be careful!). You can be sure Anthropic will be building this out over the rest of 2026.”
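
For the technically minded on your team, the "lethal trifecta" EJ describes can be written down as a simple check. This is a minimal sketch, not anything OpenClaw itself ships: the `AgentCapabilities` class and its field names are hypothetical, made up here to illustrate the idea that the danger comes from all three factors being present at once.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCapabilities:
    """Hypothetical description of what an agent setup is allowed to do."""
    reads_private_data: bool       # e.g. files, inbox, CRM
    sees_untrusted_content: bool   # e.g. web pages, inbound email
    takes_external_actions: bool   # e.g. sends email, submits web forms


def has_lethal_trifecta(caps: AgentCapabilities) -> bool:
    """Return True only when all three risk factors are present together."""
    return (
        caps.reads_private_data
        and caps.sees_untrusted_content
        and caps.takes_external_actions
    )


# An agent wired into your inbox with send rights has all three factors.
full_access = AgentCapabilities(True, True, True)
# Taking away the ability to act externally removes one leg of the trifecta.
draft_only = AgentCapabilities(True, True, False)

print(has_lethal_trifecta(full_access))  # True
print(has_lethal_trifecta(draft_only))   # False
```

Removing one leg doesn't make a setup safe on its own, but it is a useful first question to ask about any agent someone wants to connect.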

At Spark we call it "tool anxiety" - the pressure to adopt before you fully understand. OpenClaw proves the capability is real. It also proves we've still got some way to go.

In the meantime, here's a starter for ten:

Your AI agent checklist: five questions for your AI Taskforce

If anyone on your team is using (or asking about) AI agents, here are five questions worth answering before they connect anything to company systems. Hand it to your Ops Director and have the conversation this week.

What can it access? List every system the agent connects to. Email, calendar, Slack, client files. If you can't list them, you don't have enough visibility.

What can it do? There's a big difference between "read my calendar" and "send emails on my behalf." Restrict permissions to the minimum needed. Read-only is your friend until you're confident.

Who sees what it sees? If an agent reads your client emails, that data is being processed by a third-party model. Check whether that's compatible with your NDAs and data processing agreements.

What's your rollback plan? If an agent sends a wrong email, posts something odd, or leaks sensitive information, how quickly can you undo it? If the answer is "we'd figure it out," that's not a plan.

Is there a human in the loop? The safest agents are the ones that draft but don't send, suggest but don't act. Start there. Autonomy is something you grant gradually, not by default.
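
If you want to hand your technical lead something concrete, two of the questions above ("What can it do?" and "Is there a human in the loop?") can be sketched as a small permissions policy. This is an illustration under stated assumptions, not the configuration format of any real agent tool: the `AgentPolicy` class and the action names such as `send_email` are hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical action names; a real agent tool would define its own.
READ_ONLY_ACTIONS = {"read_calendar", "read_email"}


@dataclass
class AgentPolicy:
    """Start read-only; anything riskier must be added on purpose."""
    allowed_actions: set[str] = field(
        default_factory=lambda: set(READ_ONLY_ACTIONS)
    )
    needs_human_approval: set[str] = field(default_factory=set)

    def decide(self, action: str) -> str:
        """Return 'allow', 'ask_human', or 'deny' for a requested action."""
        if action not in self.allowed_actions:
            return "deny"        # not on the list, so not permitted
        if action in self.needs_human_approval:
            return "ask_human"   # the agent drafts, a person approves
        return "allow"


policy = AgentPolicy()
# Grant sending only deliberately, and keep a person in the loop for it.
policy.allowed_actions.add("send_email")
policy.needs_human_approval.add("send_email")

print(policy.decide("read_calendar"))  # allow
print(policy.decide("send_email"))     # ask_human
print(policy.decide("post_to_slack"))  # deny
```

The design choice worth copying is the default: everything is denied unless it has been listed, and anything that leaves the building waits for a human.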

None of this means "don't use agents." It means use them with the same rigour you'd apply to any new hire who has access to your client data and your company email. If you want support building your AI policy, that's one of the things we help agencies with – just reply to this email.

Yes, the names are ridiculous. OpenClaw, Moltbook, Moltbot. It all sounds like a rejected Pixar franchise. But stick with me, because underneath the lobster branding there's something worth understanding.

Moltbook is an agent-only Reddit-style social network created by Matt Schlicht (CEO of Octane AI), who used his own OpenClaw agent to build the platform. The agents that post on Moltbook are primarily OpenClaw agents, the same software we just discussed above, now given a public space to interact with each other. Humans can watch but can't participate.

It launched in late January and within days claimed 1.5 million registered agents and over 100,000 posts. It's built on OpenClaw, which makes the security conversation above even more pointed.

There's legitimate scepticism about how autonomous any of this actually is - MIT Technology Review called the whole thing "peak AI theatre", noting the bots are simply mimicking what humans do on social media. (For what it's worth, the agents also started their own religion called Crustafarianism. Make of that what you will.)

Here's why this matters even if Moltbook itself turns out to be a sideshow. The infrastructure for AI agents to browse, research, compare and recommend exists right now. If agents increasingly act on behalf of consumers – finding providers, shortlisting options, making purchasing decisions – then how your clients' brands show up to AI models becomes as important as how they show up on Google. BCG mapped out three new forms of ad inventory emerging in AI environments this week (linked below): in-answer, in-conversation, and agentic ads. We're not there yet. But Moltbook shows how quickly the plumbing is being laid, much faster than human-run companies can react.

So what should you do about this?

Get your house in order. If you don't have a policy for when team members connect AI agents to company systems, that's job one. Get your ops lead and your most AI-curious team member in a room and draft something simple. The checklist above is a starting point. It doesn't need to be perfect. It needs to exist and your team need to know about it.

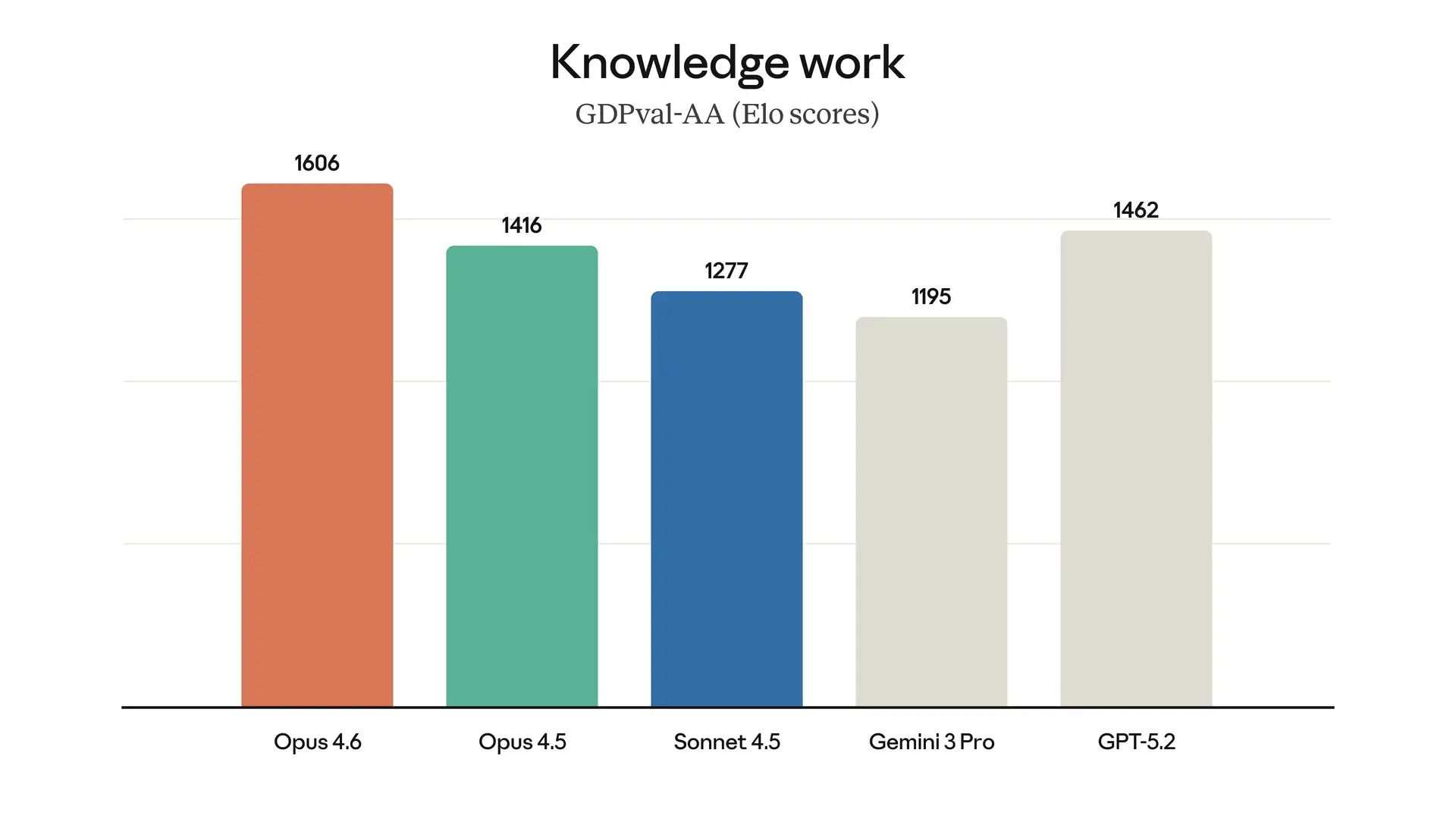

3. Claude Opus 4.6: the best model right now?

So far this edition has been about what can go wrong. But the same week that OpenClaw was keeping security researchers up at night, the tools we actually use got quietly, meaningfully better. That's the real story of where AI is right now – the gap between the frontier and the safe ground is narrowing.

While we're on the subject of AI getting more capable, Anthropic released Claude Opus 4.6 this week. And I can tell you firsthand that it's a noticeable step up, because I used it to help me draft this newsletter.

The drafts came back much closer to the bar, it followed instructions I'd previously had to repeat, and picked up connections between stories without being told. It felt less like editing an AI draft and more like working with a colleague who'd properly read the brief, context and audience – all of which lives in the master instructions.

Rather than just telling you it's better, let me show you. Here's how this newsletter actually gets made – and where the upgrade showed up.

Step 1: The Project holds our playbook. Brand guidelines, tone of voice, past editions, our core points of view, our ICP profile, and a master set of newsletter instructions that we've refined again and again over the past year – all live permanently in a Claude Project. Claude has read them all before we start.

Step 2: I come with a point of view and a pile of stuff. I know the thesis I want this edition to argue and which stories matter. Some sections arrive pre-written by me or guest writers on the team (like EJ's OpenClaw piece above). The rest is as messy as the inside of my brain: raw text, uploaded documents, links for Claude to fetch, and thoughts dictated through Wispr Flow while they're still fresh. I can see the connections between all of it – that's the easy part. The hard part is writing those connections up clearly, and if I were a full-time newsletter writer I'd relish that. But I need to spend the majority of my time running Spark, so Claude's job is to take what's in my head and help me get it onto the page.

Step 3: We build it together. Claude helps me structure, draft, and research based on the Project instructions, which keeps every edition consistent. We go back and forth on tone, flow, and whether each section earns its place. I should be honest: I'm dyslexic, and this is where AI has been transformative for me personally. I can get my thinking down fast without worrying about spelling, structure, or whether a sentence has run away from itself. Claude catches what I'd miss and helps me say what I mean more clearly. With 4.6, the first drafts needed less reworking. It threaded the OpenClaw security story into the Moltbook section without me spelling out the connection. Previous versions would have treated them as separate pieces.

Step 4: I check it against our ICP. I've built an AI assistant based on our ideal reader profile. I run the draft past it and ask: would you find this helpful? What's missing? What feels off? It gives fierce feedback and catches things I'd miss on my own. This time, 4.6 had already flagged what would matter most to our ICP without being prompted – it was thinking about the reader, not just the content. (Nothing beats hearing directly from you though – hit reply if you've got fierce feedback of your own.)

Step 5: I edit and finesse. The final pass is always mine – and it's never just one pass. I'll edit on the day I've pulled it all together, then look at it again a day or two later with fresh eyes, then again on Sunday night before it goes out. Each time I'm checking different things: voice, judgement calls, what to cut, what to push harder on, what questions would be left hanging if I didn't answer them. Then Jules does the final scrub, usually on that same Sunday night, the lucky thing.

My edit pass wasn't shorter this week – but it was different. Less time fixing structure and wording, more time thinking about whether each section would genuinely land for you. That's what a better model actually gives you. The thinking time doesn't shrink. It shifts to where it should have been all along.

4. Two things to read this week

If you're wondering what all this means at industry scale, two pieces this week mapped it out better than anything else I've read.

Ad Age compares the AI operating systems being built by Publicis, Omnicom, WPP, Dentsu, Havas, Horizon Media, and Stagwell. Every major holding company now has one. The question for independent agencies: how do you compete with this level of infrastructure investment? Our view: you can achieve nearly all of what’s possible with their proprietary systems with just your own AI subscription to Gemini, Copilot, Claude or ChatGPT, provided you embed it in the way your team work through AI Assistant-powered workflows. Not sure what that means? Read Jules’ book. Then call us.

BCG maps out what they call the new "AI attention stack" where consumer time, intent, and influence are concentrated as people spend less time navigating traditional web funnels and more time inside AI interfaces. Essential reading if you work with brands on media or digital strategy. The advertising landscape hasn't shifted this fundamentally since paid search.

That's it for this week. If there's one thing to take away from all of this, it's simple: you don't need to move fast. You need to move intentionally.

See you soon,

Co-founder, Spark AI

P.S. Our friend Trenton at Team Sterka is running a free Zoom session on influencing clients using four communication styles. It's a senior agency crowd so you'll meet interesting people in your breakout room. Register here.


About Spark AI

Spark AI empowers creative and brand leaders to turn AI curiosity into confidence through structured training and business transformation.

We have worked with 60+ agencies running AI Fundamentals workshops and AI Accelerator programmes based on our #1 bestselling book Shift – AI for Agencies.

Trusted by Oxford University Saïd Business School and backed by Innovate UK.